Ayyi is language- and GUI-agnostic. This is one of the main reasons for running multiple processes. There is a small performance penalty to pay for this, but the advantages are:

- no developer is excluded.

- reduced time spent on recoding tasks for a particular GUI toolkit. There may still be a need for applications that provide the UI conventions of a particular environment, but by sharing the backend, the development time is minimised. The 50/50 split between GTK and Qt/KDE is sometimes harmful. Competition and choice of UI are good, but choice of UI shouldn't require two sets of Model libraries.

- increased resilience to segfaults: a crash in one process does not take the other processes down with it.

- C++ is the standard for writing audio software, but there are still other languages in use, and allowing a wider selection enables developers to, for example, use more elegant languages where performance is not critical.

- processing load can be spread over multiple machines.

This approach is very common and is used by all major software vendors including Microsoft and Apple. It has many names and variations, and is sometimes referred to as Service Oriented Architecture or a Distributed Object system. However, the approach taken here is a practical one, forsaking some architectural elegance for performance.
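The resilience benefit of separate processes can be illustrated with a minimal sketch. It is not Ayyi code: `CRASHING_CLIENT` and `run_client` are hypothetical names, and `os.abort()` stands in for a segfault in a buggy client. The parent process plays the role of a server that keeps running after a client dies.

```python
import subprocess
import sys

# Hypothetical stand-in for a client that crashes hard (e.g. a buggy GUI).
CRASHING_CLIENT = "import os; os.abort()"


def run_client(source: str) -> int:
    """Run a client in its own process and return its exit status."""
    result = subprocess.run(
        [sys.executable, "-c", source],
        stderr=subprocess.DEVNULL,  # hide any crash message from the child
    )
    return result.returncode


def main() -> None:
    status = run_client(CRASHING_CLIENT)
    # The client died abnormally, but this process is unaffected and
    # could carry on serving other clients.
    print(f"client exited abnormally: {status != 0}")
    print("server still running")


if __name__ == "__main__":
    main()
```

Had the same faulty code been loaded in-process, for example as a shared library, the crash would have ended the whole application.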

One way of thinking of the system is as a plugin system for the Song Model. It doesn't replace DSP plugins, which for performance reasons need to be in-process. Modifications to the Model, by contrast, are not processor-intensive, and they are too diverse in nature to fit a restrictive API.

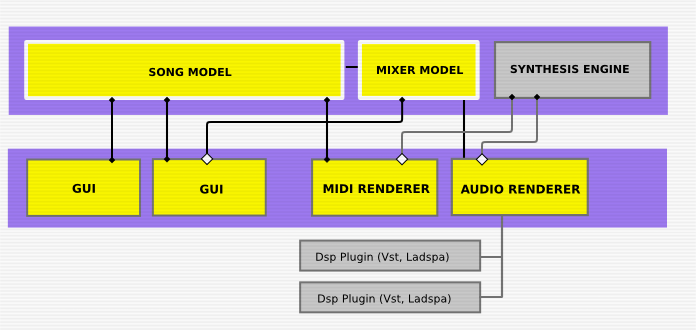

The diagram shows a possible example of a working setup. Items in grey are participants that use existing non-Ayyi communication methods.

Multiple views can be connected to the Model. These would be a mixture of 'sequencer' interfaces, specialised editors (e.g. tracker style, beat detector, looper), visualisation panels, and so on.

Multiple users can simultaneously connect to the Song Model, allowing collaborative working.
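As a rough illustration of several views sharing one Model, the sketch below uses a plain observer pattern. Everything in it is an assumption made for the example: `SongModel`, `View`, `connect` and `set_level` are hypothetical names, not the Ayyi API, and the clients are ordinary in-process objects, whereas in Ayyi they would be separate processes and the inter-process transport is not shown.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

# Hypothetical callback type: (track name, new level).
Listener = Callable[[str, float], None]


@dataclass
class SongModel:
    """Toy shared model mapping track names to fader levels."""

    levels: Dict[str, float] = field(default_factory=dict)
    listeners: List[Listener] = field(default_factory=list)

    def connect(self, listener: Listener) -> None:
        """Register a client to be told about every change."""
        self.listeners.append(listener)

    def set_level(self, track: str, level: float) -> None:
        """Apply a change, then broadcast it to all connected clients."""
        self.levels[track] = level
        for notify in self.listeners:
            notify(track, level)


class View:
    """Toy client that records the changes it has been told about."""

    def __init__(self, name: str, model: SongModel) -> None:
        self.name = name
        self.seen: List[Tuple[str, float]] = []
        model.connect(self.on_change)

    def on_change(self, track: str, level: float) -> None:
        self.seen.append((track, level))


model = SongModel()
sequencer = View("sequencer", model)
mixer = View("mixer", model)

# A change made through the model reaches every connected view.
model.set_level("drums", 0.8)
```

Because all changes go through the single Model, every view sees the same sequence of updates regardless of which client made the change.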

It is envisaged that there would normally be only one audio renderer and one MIDI renderer, and in fact they are sometimes implemented by the same application.